When it breaks, who pays?

May 4, 2026 · 5 min read · Vedran Lebo

Something breaks at 3am. An alert fires. Someone gets paged.

The next thirty minutes are not spent fixing the problem. They're spent figuring out what the problem actually is. Which server. Which service. When it last changed. What's different now compared to this morning. Who touched it. Whether the patch that ran yesterday could be related. Whether the firewall rule that was updated last week matters here.

You're not operating. You're investigating. And you're doing it with five different tools open, none of which talk to each other, all of which have slightly different ideas about the current state of your infrastructure.

This is the normal state of most server fleets. Not because the teams running them are careless. But because the tools available were never designed to give you a single coherent picture. They were designed to do one thing well and let you figure out the rest.

The cost of that gap is real. It just doesn't show up on an invoice.

The operator pays first

The person who pays most visibly is the one holding the pager. The sysadmin, the platform engineer, the SRE.

The immediate cost is time. Not time spent fixing things. Time spent establishing what's true. Every incident has a "figure out what's actually happening" phase before any remediation can begin. In a well-instrumented fleet, that phase is short. In a fleet running on four disconnected tools, it can be the majority of the incident window.

But the deeper cost is cognitive. When there's no single source of truth, the operator becomes the integration layer. They remember that the patch tool and the inventory tool disagree about which packages are installed on the database hosts. They know that the compliance scanner doesn't account for the custom kernel parameters set during last quarter's hardening push. They carry in their head the connections between systems that the systems themselves can't express.

That's an enormous amount of context to hold. And it means the organisation's operational knowledge is dangerously concentrated in a small number of people who are quietly burning out.

There's also a reputational cost, which is less discussed but very real. When something breaks and leadership asks what happened, the operator is the person in the room who either has an answer or doesn't. In a fragmented environment, the honest answer is often "we're still piecing together the timeline." That erodes confidence, even when the team is genuinely competent. The problem isn't skill. It's visibility.

The system pays second

Fragmented tooling creates structural blind spots that compound over time.

The most common is the inventory gap. A server gets provisioned. It ends up in the cloud console but not in the patch management tool. Or it gets added to the patch tool but never synced to the compliance scanner. These gaps are almost never discovered until something breaks. By then the host may have been sitting unpatched for months.
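The inventory gap is easy to make concrete. Here is a minimal sketch, assuming each tool can export the list of hosts it knows about. The tool names and hostnames are hypothetical, and a real export would need hostname normalisation before a comparison like this is trustworthy.

```python
# Hypothetical host lists exported from three tools (illustrative data only).
sources = {
    "cloud_console": {"web-01", "web-02", "db-01", "db-02", "cache-01"},
    "patch_tool": {"web-01", "web-02", "db-01"},
    "compliance_scanner": {"web-01", "db-01", "db-02"},
}


def inventory_gaps(sources):
    """Map each host to the sorted list of tools that do not know about it."""
    all_hosts = set().union(*sources.values())
    gaps = {}
    for host in sorted(all_hosts):
        missing = sorted(
            name for name, hosts in sources.items() if host not in hosts
        )
        if missing:
            gaps[host] = missing
    return gaps


gaps = inventory_gaps(sources)
for host, missing in gaps.items():
    print(f"{host}: not tracked by {', '.join(missing)}")
```

In this sample, cache-01 exists in the cloud console but is invisible to both the patch tool and the compliance scanner. That is exactly the kind of host that sits unpatched for months.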

The second is traceability. When an incident requires you to answer "what changed on this host in the last 72 hours," you're assembling a timeline from multiple systems with different log formats, different retention policies, and different ideas about what counts as a change. The full picture is rarely available. Teams end up making decisions based on partial information and hoping they're not missing something important.
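To see why this is harder than it sounds, consider a sketch of the timeline assembly, assuming two sources with different formats: a patch log with ISO 8601 timestamps and a firewall event feed with epoch seconds. Both formats, the field names, and the sample events are assumptions made for illustration. They are not taken from any particular tool.

```python
from datetime import datetime, timedelta, timezone


def parse_patch_log(line):
    # Assumed format: "<ISO 8601 timestamp> <host> <free-text detail>"
    ts, host, detail = line.split(" ", 2)
    return {"time": datetime.fromisoformat(ts), "host": host,
            "source": "patch", "detail": detail}


def parse_firewall_event(event):
    # Assumed format: dict with "epoch" (seconds, UTC), "target" and "rule".
    return {"time": datetime.fromtimestamp(event["epoch"], tz=timezone.utc),
            "host": event["target"], "source": "firewall",
            "detail": event["rule"]}


def changes_since(events, host, now, window_hours=72):
    """Return one host's events inside the window, oldest first."""
    cutoff = now - timedelta(hours=window_hours)
    hits = [e for e in events
            if e["host"] == host and cutoff <= e["time"] <= now]
    return sorted(hits, key=lambda e: e["time"])


# Illustrative sample data.
patch_lines = [
    "2026-05-03T22:15:00+00:00 db-01 upgraded openssl",
    "2026-04-20T10:00:00+00:00 db-01 upgraded kernel",
    "2026-05-03T22:20:00+00:00 web-01 upgraded openssl",
]
firewall_events = [
    {"epoch": int(datetime(2026, 5, 2, 9, 0, tzinfo=timezone.utc).timestamp()),
     "target": "db-01", "rule": "allow 5432 from app subnet"},
]

events = [parse_patch_log(line) for line in patch_lines]
events += [parse_firewall_event(e) for e in firewall_events]

now = datetime(2026, 5, 4, 3, 0, tzinfo=timezone.utc)
timeline = changes_since(events, "db-01", now)
for e in timeline:
    print(e["time"].isoformat(), e["source"], e["detail"])
```

Even in this toy version, every source needs its own parser before the events can be sorted into one list. And any source whose retention is shorter than the window leaves a hole in the timeline that nobody can see.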

The third is scale. A fragmented approach works acceptably at 10 servers. At 50, the manual coordination between tools starts to hurt. At 200, it becomes the primary constraint on the team's ability to operate safely. The thing that worked when the fleet was small actively resists the growth of the fleet. Teams hit a ceiling not because their tools can't handle the load, but because the human coordination between the tools can't.

What gets expensive here is not a single incident. It's the slow accumulation of drift: configurations that changed and weren't tracked, patches that were applied inconsistently, rules that exist in one tool's model but not another's. The fleet gradually diverges from what anyone believes it to be.

The business pays last. And loudest.

The costs that end up in boardroom conversations are the ones the business pays. And they're usually the delayed consequence of everything above.

Security exposure accumulates when patches are applied inconsistently. A CVE is published. The patch is released. The team runs the update job. But because inventory is incomplete, three hosts don't get updated. Those hosts sit exposed for weeks or months before the next scan catches them. The exposure wasn't intentional. It was structural: a consequence of the gap between what the patch tool knew and what was actually running.

Compliance erosion is similar. A control is implemented. An auditor signs off. Two months later, a configuration drift means the control is no longer enforced on a subset of hosts. The next audit finds failures that the team genuinely didn't know about, because no one was watching that specific gap between systems. The remediation is frantic, manual, and so poorly documented that it doesn't actually satisfy the auditor.

Tool sprawl is the cost that's easiest to put a number on. Most teams running fragmented fleets are paying for three to five overlapping subscriptions. A patch management tool. A separate compliance scanner. A CVE feed. An RMM for remote access. An inventory system. Each of these does its job in isolation. None of them knows what the others know. The combined cost, in licenses and in senior engineer time spent bridging them, is significant. And unlike the cost of a consolidated platform, it doesn't get cheaper per host as the fleet grows. It increases.

The common thread

All three of these cost categories have the same root cause: the fleet is not legible. There's no single place where you can ask a question about your infrastructure and get a reliable answer backed by current data.

"Which servers are missing this patch?" Requires cross-referencing the inventory, the patch history, and the package data.

"Which hosts were changed in the last 24 hours?" Requires assembling logs from multiple systems.

"Are we compliant with CIS Level 2 right now?" Requires running a fresh scan, waiting, then manually reviewing the results.

These should be simple questions. In most fleets, they're not. And the cost of them being hard is paid continuously, in operator time, in system reliability, and in business risk, regardless of whether anything dramatic has gone wrong.

What a legible fleet looks like

A legible fleet is one where the infrastructure can answer questions about itself. Where current state is continuously collected, correlated, and available. Where the gap between "what should be true" and "what is actually true" is visible in real time, not discovered after the fact.
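The gap between "what should be true" and "what is actually true" can be sketched as a plain comparison. This example assumes both the desired and the observed settings are available as per-host dictionaries. The setting names and values are illustrative, and this is not a description of how any specific platform stores them.

```python
def find_drift(desired, actual):
    """Return {host: {setting: (expected, observed)}} for every mismatch."""
    drift = {}
    for host, want in desired.items():
        have = actual.get(host, {})
        diffs = {
            key: (value, have.get(key))
            for key, value in want.items()
            if have.get(key) != value
        }
        if diffs:
            drift[host] = diffs
    return drift


# Hypothetical baseline applied to every host.
baseline = {"PermitRootLogin": "no", "net.ipv4.ip_forward": "0"}
desired = {"web-01": baseline, "db-01": baseline}

# Hypothetical observed state; db-01 has drifted on one setting.
actual = {
    "web-01": {"PermitRootLogin": "no", "net.ipv4.ip_forward": "0"},
    "db-01": {"PermitRootLogin": "yes", "net.ipv4.ip_forward": "0"},
}

drift = find_drift(desired, actual)
print(drift)
```

The comparison itself is trivial. The hard part is having a current and complete `actual` for every host, which is what continuous collection provides.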

When an incident happens, you know within seconds which hosts are affected, what changed recently, and what their current configuration looks like. The investigation phase shrinks from thirty minutes to three. The operator isn't the integration layer. The platform is.

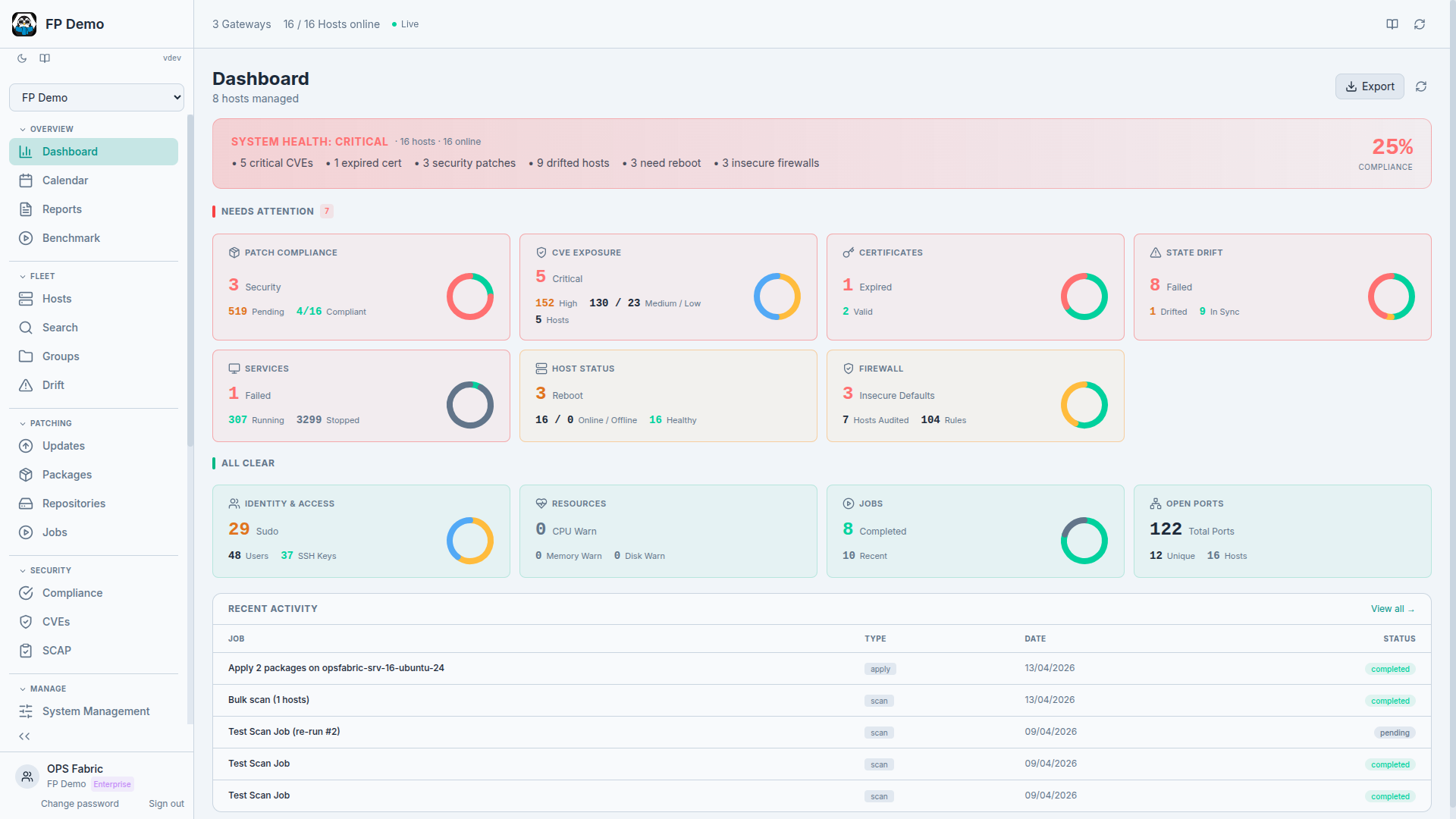

When a CVE drops, you don't run a manual query across four tools. You look at a single view that's already correlated the CVE against every installed package on every host. You see which hosts are affected, whether a fix is available, and you can kick off remediation from the same screen.
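The correlation behind that view can be sketched in a few lines, assuming per-host package data is already collected in one place. The package name, versions, and hosts below are made up, and the version comparison is deliberately naive: real distribution versions carry epochs and suffixes that need a proper comparator.

```python
def parse_version(version):
    # Naive dotted-integer parsing; real package versions are messier.
    return tuple(int(part) for part in version.split("."))


def affected_hosts(installed, package, fixed_in):
    """Return hosts running `package` at a version older than `fixed_in`."""
    fixed = parse_version(fixed_in)
    return sorted(
        host
        for host, packages in installed.items()
        if package in packages and parse_version(packages[package]) < fixed
    )


# Hypothetical collected package data: host -> {package: version}.
installed = {
    "web-01": {"examplelib": "2.4.1"},
    "web-02": {"examplelib": "2.5.0"},
    "db-01": {"examplelib": "2.3.9", "otherpkg": "1.0.0"},
    "cache-01": {"otherpkg": "1.0.0"},
}

# Hypothetical advisory: the issue is fixed in examplelib 2.5.0.
affected = affected_hosts(installed, "examplelib", "2.5.0")
print(affected)
```

The answer is only as good as `installed`. A host missing from that data is silently treated as unaffected, which is why the inventory gap and the CVE exposure are the same problem.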

When an audit happens, you don't scramble to assemble evidence. The compliance posture is continuously tracked. The remediation history is already there. The report generates from data that was being collected anyway.

This is what OpsFabric is built to do. Not to replace every tool in your stack, but to be the layer that makes your fleet legible, so that the costs described above stop being a background tax on your operations and start being problems you can actually see and fix.
